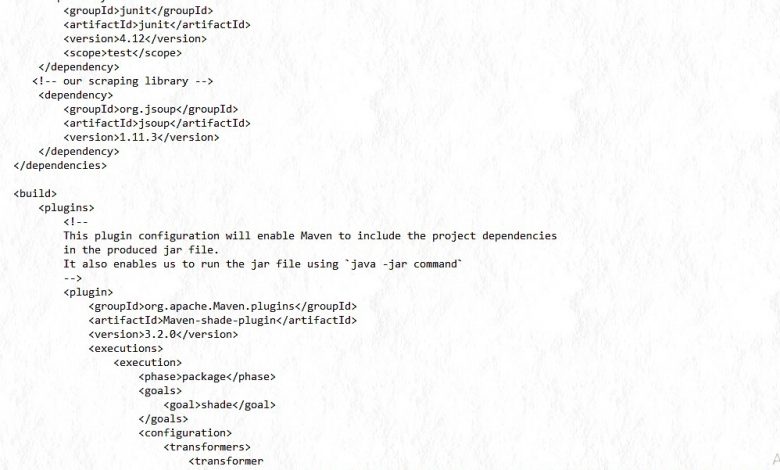

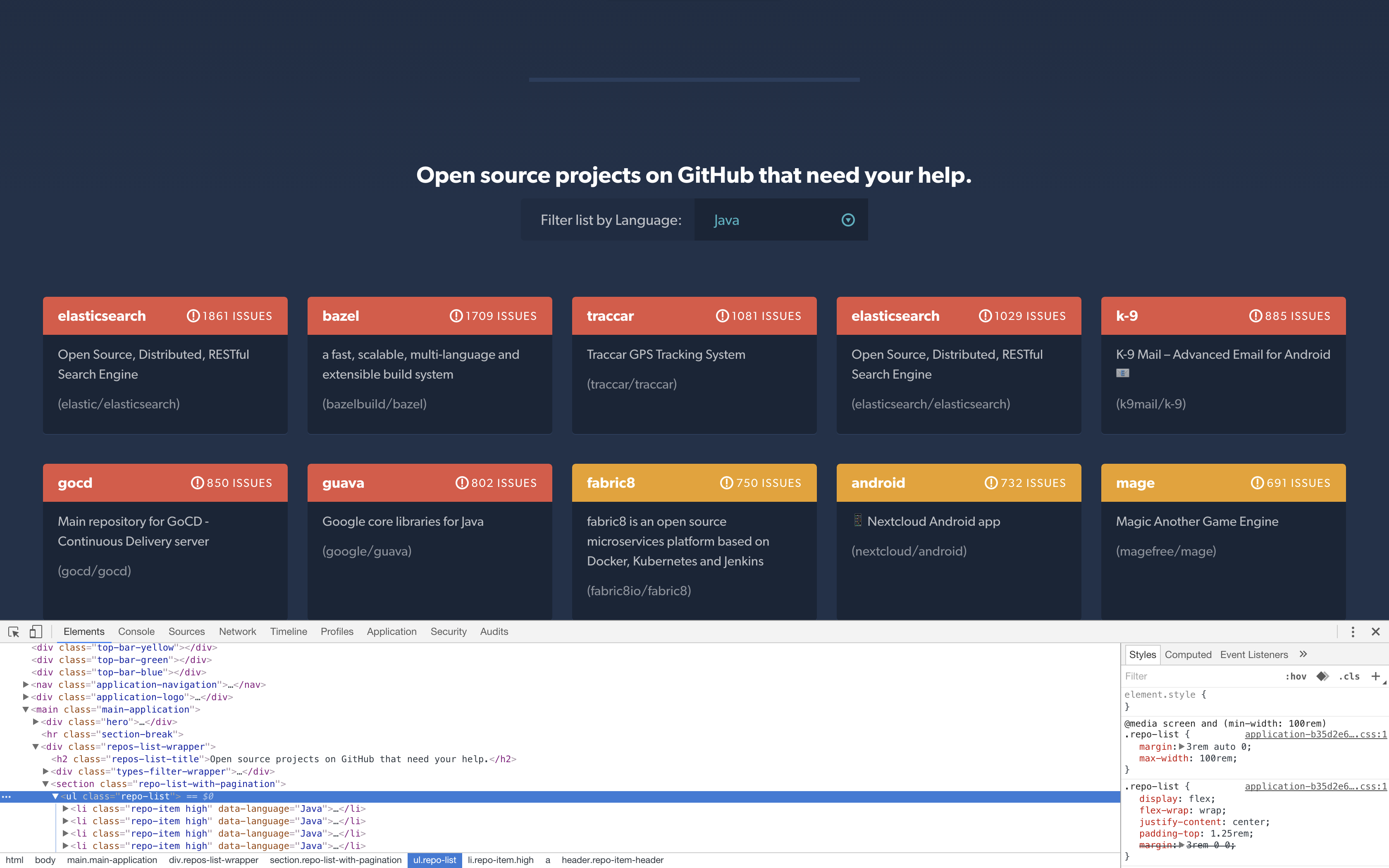

Data has recently become a need of almost every business. For this reason, web scraping has become one of the most popular topics today. It is frequently used by many businesses, platforms, and especially artificial intelligence projects. It is now easier and faster to obtain data from websites. Data can be scraped easily with a web scraping API or any library in the Python programming language.

Many data extraction options exist, such as a web scraping API, a Python library, or manual scraping. However, these methods have advantages and disadvantages relative to each other. In this article, we will talk about which method should be preferred in which situation. Next, we’ll review the most popular Python web scraping libraries.

Which Method Should Be Preferred for Web Data Extraction?

There are two popular methods for developing web scraping applications: using a Python library or using a web scraper API.

A popular web scraper API like Zenscrape provides businesses with many services without additional development. Chief among these are the proxy pool and automatic rotation of IP addresses. This service allows users to create automated web scraping processes without additional development. In addition, it is easier to use in applications with a distributed software architecture, because it integrates easily with all programming languages. Its structure is quite simple and it can also provide a location-based scraping service.

On the other hand, performing web scraping using a Python library is completely free. Adjusting the configuration is, in many respects, up to the developer. Python web scraping libraries are open source, so you can be a part of the community. Since there are multiple libraries in Python, it is possible to try alternatives easily.

Most Popular Web Scraper Libraries to Extract Data in Python

In this section, we will examine 5 Python web scraping libraries. These are the web scraping libraries most preferred by developers.

Beautiful Soup

Beautiful Soup is one of the most popular web scraping libraries in Python. This library is used to pull and analyze data from web pages. It parses HTML and XML documents and allows you to extract tags and text from these documents. It is fast and effective in data extraction and analysis.

As part of a project I’m working on, I needed to get documents from state institutions. And instead of writing code specific for each site, I decided to try creating a “universal” document scraper. It can be found as a separate module within the main project.

The project is written in Scala, and can be used in any JVM project (provided you add a Scala jar dependency). It is meant for scraping documents, rather than random data. It can probably be extended to do that, but for now I’d like it to be more (state-)document / open-data oriented, rather than a tool for commercial scraping (which is often frowned upon).

It is now in a more or less stable form. I’ve already deployed the application and it works properly, so I’ll just share a short description of the functionality. The point is to be able to specify scraping only via configuration. The class used to configure individual scraping instances is ExtractorDescriptor. It specifies the following:

- Target URL, HTTP method, and body parameters (in case of POST). You can put a placeholder which will be used for paging.
- The type of document (PDF, doc, HTML) and the type of the scraping workflow, i.e. how the document is reached on the target page. There are 4 options, depending on whether there’s a separate details page, whether there’s only a table, and where the link to the document is located.
- XPath expressions for the elements containing metadata and the links to the documents. There’s a different expression depending on where the information is located: in a table or in a separate details page.
- Date format, for the date of the document. Optionally, a regex can be used in case the date cannot be strictly located by XPath.
- Simple “heuristics”: if you know the URL structure of the document you are looking for, there’s no need to locate it via XPath.
- Other configurations, like JavaScript requirements, whether scraping should fail on error, etc.

When you have an ExtractorDescriptor instance ready (for Java apps you can use the builder to create one), you can create a new Extractor(descriptor), and then (usually with a scheduled job) call extractor.extractDocuments(since). The result is a list of documents (there are two methods: one returns a Scala list, and one returns a Java list).

The library depends on htmlunit, nekohtml, scala, xml-apis and some more, visible in the pom. It also doesn’t handle distributed running of scraping tasks; this you should handle yourself. No jar release or Maven dependency is published yet, so if one needs it, it has to be checked out and built. Even if not as code, it may be useful at least as an approach to getting data from web pages programmatically.
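To make the configuration-only approach described above a little more concrete, here is a minimal Python sketch of two of the ideas: a paging placeholder in the target URL and an optional regex that isolates the document date before it is parsed. This is not the Scala library's API. The class name DescriptorSketch, its fields, the "{page}" placeholder syntax and the example.org URL are all assumptions made up for illustration.

```python
import re
from dataclasses import dataclass
from datetime import date, datetime
from typing import Optional


@dataclass
class DescriptorSketch:
    """Hypothetical stand-in for a scraping descriptor; not the library's class."""
    url_template: str                 # contains a "{page}" placeholder (assumed syntax)
    date_format: str                  # strptime format of the document date
    date_regex: Optional[str] = None  # optional fallback to isolate the date text

    def page_url(self, page: int) -> str:
        # Substitute the paging placeholder to get the URL of one result page.
        return self.url_template.replace("{page}", str(page))

    def parse_date(self, raw: str) -> date:
        # If a regex is configured, use its first group to cut the date
        # out of surrounding text before applying the date format.
        text = raw.strip()
        if self.date_regex:
            match = re.search(self.date_regex, text)
            if match is None:
                raise ValueError(f"no date found in {raw!r}")
            text = match.group(1)
        return datetime.strptime(text, self.date_format).date()


# Everything site-specific lives in the configuration, not in code.
descriptor = DescriptorSketch(
    url_template="https://example.org/documents?page={page}",
    date_format="%d.%m.%Y",
    date_regex=r"(\d{2}\.\d{2}\.\d{4})",
)

print(descriptor.page_url(3))
print(descriptor.parse_date("Published on 14.02.2015 by the ministry"))
```

Supporting another site in this sketch would only mean creating another DescriptorSketch with different values, which is the point of driving the scraper from configuration.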
